When I worked at Citrix one of my now colleagues at NVIDIA (Jason Southern) came to us with a proposal and PoC (you can read the blogs about that experiment, here) for us to implement templates to assist users to configure and optimize HDX graphics for XenDesktop and XenApp (Template details – here). XenDesktop/XenApp 7.6 FP3 saw the release of these templates which allowed users to configure their graphics for the specific needs of their users (user experience vs. server scalability) and network conditions (WAN/limited bandwidth etc.).

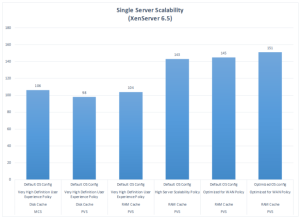

It’s great to see the impact these templates have had and how users have found them generally useful. I was pleased to see a former colleague (Daniel Feller) publish some benchmark results he’d done on his own blog, here. Moreover Daniel added additional information demonstrating that optimizing the OS itself (in that case Windows 7) could help the user claw back even more scalability improvements.

I do however feel that for those unfamiliar a little more information might be helpful for them to understand Daniel’s results.

These results are Windows 7, Windows 7 is a _legacy_ OS

In his results Daniel referred to his use of the Citrix Policies: Very High Definition vs High Server Scalability vs Optimized for WAN; and also referred to Marcel Calef’s excellent whitepaper describing them, which you can find here: http://support.citrix.com/article/CTX202330. If you delve into this whitepaper you will find TWO policies for each of “High Server Scalability” and “Optimized for WAN”: a standard one and a “Legacy OS” variant, for example “Optimized for WAN” and “Optimized for WAN-Legacy OS”. If you read the details in http://support.citrix.com/article/CTX202330 you will find:

- Optimized for WAN-Legacy OS. This Optimized for WAN template applies only to VDAs running Server 2008 R2 or Windows 7 and earlier. This template relies on the Legacy graphics mode which is more efficient for those operating systems.

It isn’t clear to me from the results which of these Daniel used; I’d be very interested to see a comparison involving both the legacy and non-legacy policies. He does mention:

- Why did we see such a gain when moving to the High Server Scalability policy? Because the Very High Definition User Experience policy utilizes the H.264 codec, which gives the user a great experience, but at the cost of CPU utilization. When we switch to the High Server Scalability policy, we utilize the latest ThinWire technology. We are able to reduce CPU utilization while increasing server density by changing the way the system encodes the data.

So why two policies?

There are two parts to the graphics system:

- The OS (in this case windows 7) and how it offers up graphics to the protocol.

- The protocol and its ability to receive what the OS and its graphics stack supplied.

The original thinwire technologies were highly optimized for the graphics stack of the Windows 7 and Server 2008 R2 operating systems. This original thinwire mode was renamed “legacy graphics mode” to indicate it was for legacy _operating systems_. I think, however, that the name risks implying that the recent thinwire+/thinwire compatibility modes are in some way always better, and that the legacy graphics mode is in some way less good and has been improved upon.

Between Windows 7 and Windows 8, and between Server 2008 R2 and Server 2012 R2, Microsoft made radical changes to the graphics stack that meant Windows 8 was no longer churning out the graphics in a format the legacy graphics mode was designed to receive. At this point at Citrix we wrote the thinwire+/thinwire compatibility mode as a protocol optimized for what those newer operating systems were now supplying. We did try to ensure it was optimized as well as possible for the data from the legacy OSs (Win7/2008 R2), but in some areas that was limited.

So if Daniel is using “Optimized for WAN” rather than “Optimized for WAN-Legacy OS”, his results probably don’t show the optimal configuration for some users.

What to expect with Windows 7 – if using “Optimized for WAN” vs. “Optimized for WAN-Legacy OS” or “High Server Scalability” vs. “High Server Scalability-Legacy OS”.

Daniel wrote that “Each test was conducted utilizing the same, knowledge worker workload”; I expect this was something like a LoginVSI workload, which runs a series of applications and user interactions that become increasingly complex over the test run. Something like opening a series of email inboxes, typing and scrolling, followed by a bit of editing a Word document and changing tabs; then some more complex web browsing; perhaps a PowerPoint with transitions, and then a game and some videos, with increasingly intensive graphics workloads.

The users most likely to see a significant difference on Windows 7 are probably those with applications that fall into the simpler and earlier parts of something like a LoginVSI workload: those using “legacy” applications (those which contain code optimised for the GDI-like stack of Windows 7 that Microsoft removed in Windows 8), such as Microsoft Office and similar applications that have evolved and been around for a while, or those with very simple, bespoke specialist applications designed for specific tasks.

There are plenty of industries where the average user and application is not using a standard knowledge worker set of office apps but a strange collection of commodity applications that are graphically very simple – this is fairly common in call centres, finance and healthcare. There are a lot of apps that are just designed to enter patient/customer information or financial data that make the Excel UI look jazzy! Some of these apps can look similar to a DOS window.

Why you might choose the new thinwire+/thinwire compatibility over legacy mode

Legacy mode, because of the technologies involved, has to be implemented per host. I’d expect Windows 8 to perform worse with legacy graphics mode than Windows 7 does with thinwire+/thinwire compatibility. So if you have to run a mix of OSs, including some Windows 8 and above, the non-legacy policies may be the best choice.
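As a rough illustration of that rule of thumb, here is a minimal Python sketch. It is not a Citrix tool or API: the function name, the `goal` values and the OS strings are hypothetical, and only the template names come from the whitepaper. It assumes a “Legacy OS” template is only worth considering when every VDA on the host runs Windows 7 or Server 2008 R2.

```python
# Rule-of-thumb sketch only. The helper, its arguments and the OS strings are
# hypothetical; only the template names are taken from CTX202330.
LEGACY_OSES = {"Windows 7", "Windows Server 2008 R2"}

BASE_TEMPLATES = {
    "scalability": "High Server Scalability",
    "wan": "Optimized for WAN",
}


def suggest_template(vda_oses, goal):
    """Suggest a template name for one host.

    vda_oses: iterable of OS names for the VDAs on the host.
    goal: "scalability" or "wan".
    """
    base = BASE_TEMPLATES[goal]
    oses = set(vda_oses)
    # Only suggest the Legacy OS variant when every VDA is a legacy OS;
    # a mixed host falls back to the non-legacy template.
    if oses and oses <= LEGACY_OSES:
        return base + "-Legacy OS"
    return base


if __name__ == "__main__":
    print(suggest_template(["Windows 7"], "wan"))
    print(suggest_template(["Windows 7", "Windows 8.1"], "scalability"))
```

Treat the result as a starting point for your own testing rather than a recommendation; as discussed below, quality and RAM footprint matter as well as the OS mix.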

The thinwire (legacy) and thinwire+/compatibility protocols/modes should be expected to show different RAM footprints though, which will vary between different OSs too. These protocols also have different caching implementations so there will be subtle differences. The thinwire technologies set qualities up front too and so careful attention needs to be paid not just to metrics like CPU/frame rates and bandwidth but also to the quality of what a user actually receives for that resource.

Will it make a huge difference using the “Legacy OS” templates to Daniel’s results?

Probably not, as the proportion of GDI-optimised applications in an average office desktop is probably not a hugely significant factor. However, on a legacy server OS (2008 R2 and lower) I’d expect legacy to perform better than thinwire+ in many scenarios, partly because a server OS with XenApp is more likely to have a large number of users, so the RAM footprint differences may become more apparent. Additionally, those simpler and GDI-friendly legacy apps are more likely to be deployed via XenApp.

“High Server Scalability” vs. “Optimized for WAN”

Looking at Daniel’s results, it becomes apparent that Optimized for WAN can bump your server scalability a little; however, users should be aware that this comes at a cost, because it implements additional compression to reduce bandwidth (this changes the CPU footprint a little too). In Marcel’s whitepaper (http://support.citrix.com/article/CTX202330) you will find details, including how an additional color depth policy is implemented. This “color depth for simple graphics” is an implementation of ECC/chromatic sub-sampling, and on more graphical applications it can leave visual artefacts a user may complain about. Many simpler apps are unaffected though. So the administrator really needs to look not just at the scalability demonstrated in a benchmark but also at whether their users are likely to see and complain about artefacts (I’ve written another blog which includes images showing those artefacts on more visual applications – here).

A Good Job

Daniel’s done a very good job and clearly understands the technologies, but I felt a little more info might help those less experienced. I particularly like that Daniel has investigated scalability for both Hyper-V and XenServer, which shows there is very little difference between the hypervisors (a question users often ask but on which there isn’t much information). Unfortunately, I suspect VMware’s licensing would probably prohibit him, as a Citrix employee, from publishing comparisons with vSphere/ESXi, so administrators may need to simply follow his methodology for themselves to investigate that one. By putting out the figures for Hyper-V and XenServer, though, he’s provided those designing deployments with a lot of reference data to use to calibrate their own testing.

great post rachel. i need to update the win 7 blog as we were using the legacy policies for windows 7.

and you are correct, we have vmware numbers but I’m not allowed to publish due to their licensing.

I wasn’t sure… I’d be really interested to see how much difference it makes – i suspect thinwire+ will still be reasonably good, and there maybe some folk using it because run win7 + win 8 mixed on hosts….
